Technology Is Not An End But Means To Make Customer Life Easier: Manu Saale

- February 04, 2020

Mercedes-Benz R&D India (MBRDI), founded in 1996 in Bengaluru to support Daimler’s research, IT and product development activities, is now one of the largest global R&D centres outside Germany. It employs close to 5,000 skilled engineers and is a valuable centre for all business units and brands of Daimler worldwide. The centre is also a key entity for Daimler’s future mobility solutions through C.A.S.E (Connected, Autonomous, Shared and Electric) for building autonomous and electric vehicles. Its competencies in engineering and IT have progressed to using AI, AR, Big Data Analytics and other modern technologies to provide seamless connectivity. During an interaction with T Murrali, the Managing Director and CEO of MBRDI, Manu Saale, said, “The centre has been growing phenomenally. We have just started a team on cyber security... We have been helping to simulate some stack-related solutions using fuel cells. I’m waiting for a clear strategy from the company for a possible venture into the hydrogen path.” Edited excerpts:

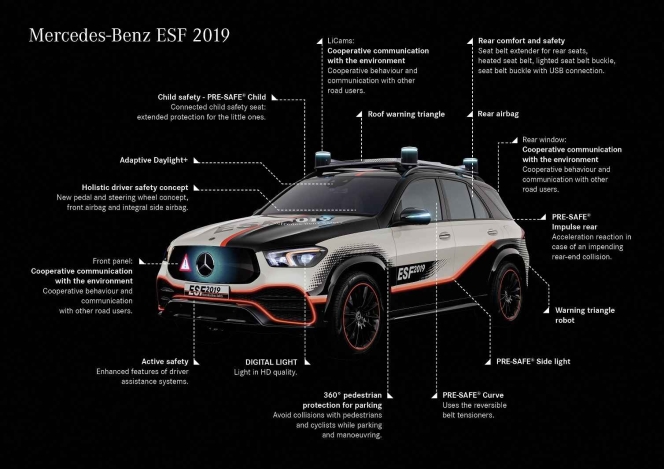

Q: Could you begin by detailing the contribution of MBRDI to the Experimental Safety Vehicle (ESF)?

Saale: The ESF is a concept vehicle. We have taken a GLE platform, tried to predict the technologies that are coming up, and put demo versions of them inside. Some of them are just future technologies but they are strictly based on the data we have collected, and the accident research and digital trends that we have seen.

There is a worldwide safety team, centred in Germany and India, which is studying all these data and statistics to predict what the future should look like. Mercedes-Benz has a history of building concept cars as mobility is changing around us. This time we have decided to put safety in perspective for the new age mobility with ESF2019.

For example, in a driverless car there is no steering wheel, so where will you put the airbag, which has so far been placed in the steering wheel? This means that the airbag concept will have to change. If you go white-boarding on this topic you will realise that some fundamental things you have been counting on all these years will change. This international team in Bengaluru supporting Germany has been working on many concepts of this kind.

We have brought it here for two reasons. One is for the contribution from India. A lot of digital simulations have been done before implementing the hardware. Bengaluru has contributed to the digital evaluation of the new safety concepts in ESF. The other reason is to inspire the engineers to innovate further based on the first level of fantasies that we have created, and how it could be taken to the next level. These are the kind of things we want our engineers to think about; ESF is a pointer in that direction.

Q: What are the possible changes with the emergence of EVs and autonomous vehicles for safety?

Saale: Imagine not being able to predict the position of passengers when a crash happens. If they are sitting in a conference mode, facing one another, how can they be protected without an airbag in front of them? That’s one; second is the use of different materials within the car and the dynamics that could happen in an accident. Third is connection to the source of a fuel tank / pack, not specific to one place but probably spread across the floor of a car. The battery and its chemical components are also critical in a crash situation.

There are many new things when we think about safety in autonomous and electric vehicles; whereas connectivity plays into our hands. I don’t think the industry has exhaustively thought about what new dimensions can come from driving autonomous vehicles.

Q: What happens if the accident is so severe that all the electrical connections are cut off? Has any thought gone into this?

Saale: I am sure they have thought about it. An airbag can pop up in milliseconds; an SOS is a message placed post-crash. Today, in an instant, we can ping the world somehow, so information of position, latitude, etc. is sent out immediately when an accident takes place. Of course it depends a lot on the emergency services and collision response in the country.

Q: What is the role played by MBRDI in the development of Artificial Intelligence (AI) and Augmented Reality (AR)?

Saale: This is the new age digital; we don’t have to go back to the old world of software alone. Digital has shown new potential in the last few years and we have tried to keep pace with the current trends. AI is certainly one of the buzz words that is coming up.

MBUX, which we flagged off in Bengaluru a few weeks ago, showcases how AI could be used as a technology to make customer life easier in the car. We look at all the use cases to find out what the customer does in a car.

For example, take the use of a camera in a car. During night driving, if the driver extends his hand to the vacant seat next to him looking for something, and if it is dark, the camera will sense that he is seeking something and switch on the lights. We need AI for that because we have to understand the hand position and the amount of stretch done; it should not be confused with the driver stretching himself after yawning. Such a simple use case requires a lot of technology. These are things where people look at customer behaviour and say ‘technology is not for the sake of technology but to make customer life easier.’

Q: The Tier-1 companies spread across Germany have come up with many futuristic solutions for vehicles. They have their own research centres. So what is the role of R&D centres of OEMs like this other than integration?

Saale: Every centre has to ride its own destiny. Even if we are a GIC we cannot expect HQ to hold our hand for ever. It’s a typical parent-child relationship and not a customer-supplier one. We have seen all the combinations of GICs working out there in the market. I think we have a good success story here. That is the value-add GIC has to think about.

A survey was done on the value-add from GICs; they used the word entrepreneurship from GICs. It was found that only 6 percent of GICs were entrepreneurial, that were really able to innovate. We were also named in that top 6 percent. It depends on the company culture, relationships, handling discussions with HQ and the local leadership teams. That’s the challenge in a GIC compared to a profit centre that is looking from one customer to another.

Q: You are also in touch with suppliers in India and across the globe for necessary hand-holding?

Saale: Absolutely, imagine a situation where the parents trust the child completely.

Q: You will be the parent and Tier-1s the children?

Saale: No, it is not that way. We behave as Daimler when we talk to Tier-1s. We tell them that ‘you know the car well, so do it by yourself and deliver the product.’ That’s the level of maturity in interaction that one can reach.

Q: When it comes to electronics, OEMs the world over are faced with many regulations. Do you see options for them to comply with all the regulations considering the amount of electronics coming into the car?

Saale: Every new thing is a technical challenge on the table. It can be stricter emission norms or features and functionalities that are difficult to reach, a technical compliance issue that crops up every now and then, and a safety or parking aspect that is covered by many regulations around the world. We thrive on such challenges that have pushed a company like Mercedes to keep on inventing because, among many other things, hardware is getting cheaper and smaller, software capabilities are growing, connectivity is increasing, computing external to the car is possible, and so many other things. OEMs are dealing with authorities, trying to handle what is possible at lower cost, because at the end of the day we have to sell. I am sure that regulators and societies around the world today are looking for some balance between technology and cost.

Q: How do you manage multiple sensors in the vehicle?

Saale: Digital appears to be very complex now but electronics will go through its life cycle and come to a point where man understands its complexity and is able to put it all together. Today, we are talking about sensor fusion - putting together the net of information and seeing it as a whole through various sensors.

Functionalities could range from a switch to radar or lidar with their spectrum of signals, to give various resolutions; the processing capability would be in milliseconds. The more we comprehend the mixed bag of signals we get, the better will be our ability to make the right decisions.
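As a rough illustration of the sensor fusion idea described above, the sketch below combines distance estimates from two sensors by inverse-variance weighting, so that the less noisy sensor counts for more. This is a generic textbook technique and not a description of any Mercedes-Benz system; the `Reading` class, the `fuse` function and all the numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    """One distance estimate from one sensor (all values are illustrative)."""
    source: str        # label only, e.g. "radar" or "lidar"
    distance_m: float  # estimated distance to the object, in metres
    variance: float    # assumed measurement noise variance, in m^2


def fuse(readings):
    """Combine readings with an inverse-variance weighted average.

    Returns the fused distance and the variance of the fused estimate.
    """
    if not readings:
        raise ValueError("at least one reading is required")
    # A sensor with lower noise variance gets a proportionally higher weight.
    weights = [1.0 / r.variance for r in readings]
    total = sum(weights)
    fused = sum(w * r.distance_m for w, r in zip(weights, readings)) / total
    return fused, 1.0 / total


# Hypothetical readings: a noisier radar and a more precise lidar.
readings = [
    Reading("radar", 25.4, 0.25),
    Reading("lidar", 25.0, 0.01),
]
distance, variance = fuse(readings)
print(f"fused distance: {distance:.2f} m, variance: {variance:.4f} m^2")
```

The fused estimate lies between the two readings, closer to the more precise sensor, and its variance is lower than that of either sensor alone, which is the sense in which seeing the signals "as a whole" improves the decision.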

Q: With all the facilities that you provide to the driver, are you not actually deskilling him?

Saale: The trend is that people don’t want to get into the hassles of driving a vehicle. Driving is stressful and cumbersome to many, which is why the autonomous car would gain popularity. The driver just has to punch in where he/she has to go and the vehicle will do it automatically, saving both mental and physical tension. A completely new user base is being introduced into mobility with software features. We have to look at it positively.

Q: Are you also working on cyber security, on things that get into the car?

Saale: We have just started a team now. Our focus on cyber security is at a centre in Tel Aviv, Israel.

Q: Do you see scope to improve the thermal efficiency of Internal Combustion (IC) engines further?

Saale: I think the capability, from an engineering perspective, exists to take the IC engine to the next level. The potential continues to be there and all OEMs talk about it. Possibly it is getting affected by the social and environmental aspects.

Q: It is said that the exhaust from a Euro-6 engine is far better than the atmospheric air in many highly polluted cities and it is not actually polluting. What is your opinion?

Saale: It is true. But people say if electricity is generated from coal then aren’t we contributing to pollution? If we localise electricity production to one area with everything contained, then it would give us better scope to control it rather than spewing it out of every vehicle tail-pipe all over the world.

Imagine millions of polluting vehicles moving around compared to millions of electric vehicles, which don’t have any tail-pipe emissions, with electricity generated by coal that is centralised; it would be a completely different technical and logistic challenge from the environmental point of view. Regulators, politicians and policy makers are all giving their views on this issue; the improvement in living standards and the coming up of smart cities would affect it. I think we are moving in the right direction with the greening of the environment covering everything. I see this sustainable city living much better pictured with electric vehicles moving around me.

Q: Can you tell us about the work done around IoT?

Saale: We are working on digitalisation of our production in many ways. One of the teams for Manufacturing Engineering in Bengaluru focuses on digital methods in manufacturing such as production planning, supply chain, logistics and IoT. The team also works on front-loading of production planning.

Q: What is your contribution to the Sprinter F-CELL, the fuel cell application, that replaced the diesel engine?

Saale: We have been helping to simulate some stack-related solutions using fuel cells. I’m waiting for a clear strategy from the company for a possible venture into the hydrogen path. (MT)

Lauritz Knudsen Partners With Orion Racing India To Support Engineering Talent

- By MT Bureau

- March 17, 2026

Lauritz Knudsen Electrical and Automation has entered into a partnership with Orion Racing India, the Formula Student team of K J Somaiya School of Engineering, Mumbai.

The collaboration is intended to support engineering students at the grassroots level and strengthen the development of electric mobility capabilities within India.

The partnership focuses on hands-on learning and experimentation in the design of electric and autonomous vehicle platforms. Lauritz Knudsen aims to foster skills in power distribution systems and electric vehicle charging infrastructure, areas central to the company’s industrial focus.

Orion Racing India has operated in student motorsport for nearly 20 years, transitioning from internal combustion engines to electric prototypes in 2019. The team uses electric race cars as a platform for students to address challenges in energy management, power systems, vehicle safety and performance engineering.

Naresh Kumar, COO, Lauritz Knudsen Electrical and Automation, said, “India’s electric mobility journey will be shaped by the ecosystem we build today. At Lauritz Knudsen, we believe meaningful change begins early, when young engineers are encouraged to build, experiment, and apply their learning to challenges. By engaging with students who are actively working on electric vehicle technologies, we are helping develop future-ready talent that will play a defining role in India’s mobility and energy future.”

Dr. Ukrande, Director of K J Somaiya School of Engineering, added, “Orion Racing India has a long and proud legacy of representing K J Somaiya School of Engineering at Formula Student competitions over the years. What makes this journey special is the continuity: each batch of students builds on the learning, experience, and spirit of those before them. Through hands-on work on electric racecars, our students move beyond textbooks to real engineering challenges. Support from industry partners like Lauritz Knudsen further strengthens this learning ecosystem and motivates students to innovate in areas critical to India’s mobility future.”

Horse Powertrain Launches kAIros AI Initiative To Accelerate Manufacturing

- By MT Bureau

- March 17, 2026

Horse Powertrain has announced kAIros, a company-wide artificial intelligence (AI) initiative led by its Horse Technologies division. The programme aims to reduce time-to-market by nearly 50 percent, decrease low-value process work by 40 percent and improve design cycle efficiency by 25 percent.

The initiative is supported by Nvidia, Google Cloud and Deloitte, focussing on engineering, production and business operations.

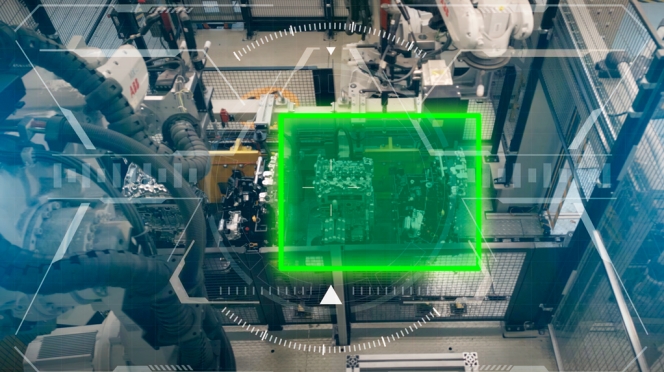

At the core of the initiative is the Horse Powertrain AI Factory, which supports model training, simulations and digital twins. The infrastructure is designed to generate training data to refine models and improve real-world deployment.

The technical framework includes:

- Nvidia RTX PRO servers equipped with Blackwell Server Edition GPUs.

- Google Cloud Nvidia RTX 6000 Blackwell Server Edition GPUs.

- Nvidia AI software, including CUDA-X, Omniverse and Cosmos, to accelerate application development.

- Google Gemini Enterprise for the deployment of AI agents to automate coordination tasks.

The kAIros initiative supports physical AI, connecting real-world operations with virtual systems in real time. This integration enables autonomous decision-making for cobots, automated guided vehicles and smart machinery. Key applications include video-based quality inspection, product simulation and robotics for process optimisation across factories and logistics.

A Centre of Excellence has been established to lead internal AI development. This multifunctional team will build applications to scale industrial expertise across the organisation and improve predictive accuracy in propulsion solutions.

NXP And Nvidia Collaborate On Integrated Robotics Solutions For Physical AI

- By MT Bureau

- March 17, 2026

NXP Semiconductors has announced a series of robotics solutions designed for real-time data processing, sensor fusion and motor control. Developed in collaboration with Nvidia, these ready-to-deploy systems implement the Nvidia Holoscan Sensor Bridge with NXP’s system-on-chip (SoC) technology to reduce component count, power consumption and costs in robotic development.

The solutions focus on Physical AI, which requires low-latency data transport to synchronise motion and sensor data. By integrating the Holoscan Sensor Bridge into NXP's software, developers can establish a direct transport route between a robot's body and its central processing unit.

The architecture incorporates several NXP technologies:

- i.MX 95 Applications Processor: A machine vision solution designed to deliver high-bandwidth data to the robot brain.

- i.MX RT1180 Crossover MCUs: A motor control solution based on a kinematic chain.

- S32J TSN Switch: Aggregates motor control data and provides direct connectivity to the brain using Time-Sensitive Networking (TSN) and EtherCAT protocols.

- Asymmetric Data Transport: Technology acquired through Aviva Links to manage high-throughput data across the robot body.

The unified architecture is designed to support humanoid form factors, which require complex motor synchronisation and real-time perception. NXP’s automotive-grade networking and functional safety expertise are used to ensure the reliability of these systems in physical environments.

Charles Dachs, Executive Vice-President and General Manager, Secure Connected Edge at NXP Semiconductors, said, “Physical AI is redefining what machines can do in the real world, and humanoid robots represent the most complex expression of that revolution. By combining NXP’s deep expertise in edge processing, secure networking, functional safety and real-time control with Nvidia robotics platforms, we are greatly simplifying physical AI development, enabling seamless connectivity between the physical AI edge and the central brain. This is just the beginning of what NXP will deliver to accelerate the ecosystem for physical AI.”

Deepu Talla, Vice-President of Robotics and Edge AI, Nvidia, commented, “The development of autonomous machines requires a high-performance computing architecture that can synchronize complex motor controls with real-time perception. By integrating Nvidia Holoscan Sensor Bridge into its edge portfolio, NXP is providing developers with a scalable foundation to accelerate the deployment of physical AI.”

TIER IV Launches Data-Centric AI Software Stacks For Level 4 Autonomous Driving

- By MT Bureau

- March 17, 2026

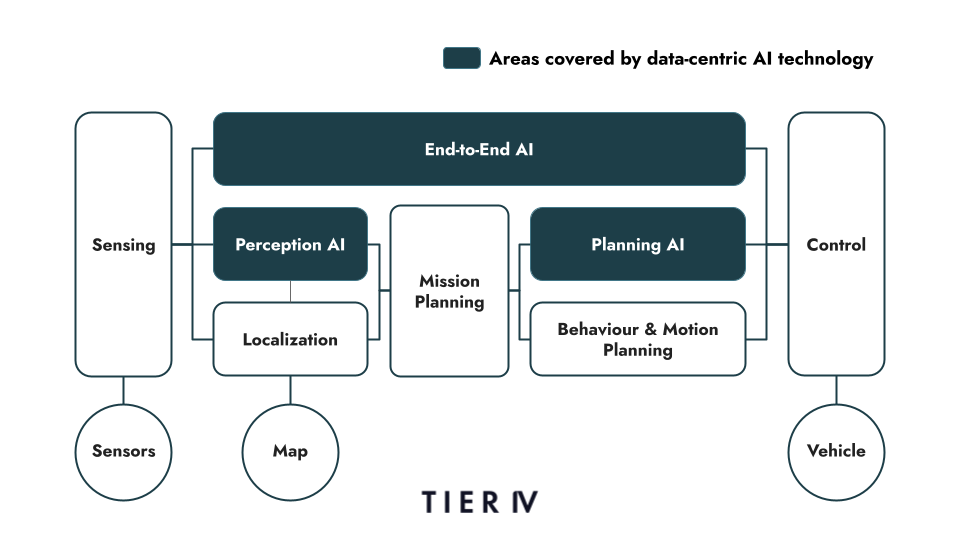

Tokyo-headquartered deep-tech company TIER IV has announced that it has developed new software stacks for Level 4 autonomous driving powered by data-centric artificial intelligence. The software is available via Autoware, an open-source platform, and is designed to be hardware-agnostic, supporting various system-on-chip (SoC) and sensor configurations.

The software stacks are built on an end-to-end (E2E) architecture and offer two primary configurations to allow adaptability across diverse driving environments:

- Hybrid System: Utilises perception and planning AI. It employs diffusion models to capture temporal changes in surroundings and generates trajectories by combining machine learning models with environment perception.

- E2E System: Integrates perception, planning, and control into a single learning process. It uses world models to treat surroundings and driving status as vector representations, creating a pipeline from recognition to vehicle operation.

Automakers can use TIER IV’s machine learning operations (MLOps) platform to iterate AI models. The platform manages data-quality validation, anonymisation and tagging, while generating synthetic and real-world datasets for system evaluation.

TIER IV has commenced 60-minute test runs in three global hubs to validate the technology under distinct traffic conditions:

- Tokyo: Collaborating with the University of Tokyo using a Toyota JPN TAXI to evaluate urban hub-to-hub travel.

- Pittsburgh: Partnering with Carnegie Mellon University using a Hyundai IONIQ 5 for robotaxi tests between Pittsburgh International Airport and the university.

- Munich: Working with the Technical University of Munich using a Volkswagen T7 Multivan for safety evaluations in European urban scenarios.

While safety drivers remain on board to comply with local regulations, no manual intervention is expected during normal operation.

Shinpei Kato, Founder and CEO, TIER IV, said, “To achieve Level 4+ autonomy, we need technology that evolves autonomously alongside the environments it serves. Our new data-centric AI models and collaborative MLOps platform provide a common language and a shared foundation for the entire industry. By working with research institutions, industry leaders and the development community to advance autonomous driving technology through Autoware, we are creating an open, transparent environment that fosters continuous, collective innovation for the benefit of society.”

Yang Zhang, Chairman, Autoware Foundation’s Board of Directors, said, “Autoware serves as the global foundation where researchers, corporations and developers collaborate to advance autonomous driving software. Our collaboration with TIER IV strengthens the international framework for validating and refining E2E autonomous driving through real-world deployment. By testing across three continents, we are driving standards-based innovation and expanding an open ecosystem that lowers the barrier for a diverse range of partners to join and contribute.”

Yutaka Matsuo, Professor at the University of Tokyo, added, “The release of these software stacks and MLOps platform is a vital step toward deploying advanced AI models in industrial applications. By accumulating data from Japan’s distinctive traffic environments through our Tokyo testing and contributing those insights back to Autoware, we aim to further bridge the gap between academic research and real-world deployment.”
