BorgWarner To Display Advanced eMobility Solutions At Bharat Mobility Global Expo 2025

- By MT Bureau

- January 13, 2025

BorgWarner is all set to display its latest developments in electrified drivetrain solutions at the Bharat Mobility Global Expo 2025 in New Delhi.

At Booth M5 in Hall H2 at the Components Show in Yashobhoomi, Dwarka, New Delhi, BorgWarner will present its LFP battery systems, which are built on a sturdy, modular architecture based on blade cell technology from FinDreams Battery. The various pack sizes suit a range of off-road applications and all-electric commercial vehicles, including trucks and buses. These batteries, which come with the company's latest in-house technology and software, help to increase vehicle range, dependability and safety performance. Additionally, BorgWarner will showcase its advanced turbocharging solutions, eMotors, high-voltage coolant heaters, integrated drive modules and next-generation inverters, highlighting a portfolio that serves advanced combustion, hybrid and electrification requirements.

Dr Stefan Demmerle, Vice President, BorgWarner Inc and President and General Manager, PowerDrive Systems, said, “Our participation in the Bharat Mobility Global Expo marks a significant opportunity to showcase our pioneering eMobility technologies. With our high-energy LFP batteries and advanced power electronics as well as thermal management solutions, we are committed to supporting India’s rapid move towards cleaner, more efficient mobility solutions. Our global expertise and local presence enable us to be a key partner in this transformation.”

Mercedes-Benz Commences Mass Production Of Axial Flux Motors At Historic Berlin Plant

- By MT Bureau

- June 10, 2026

German luxury carmaker Mercedes-Benz has officially launched large-scale series production of its new high-performance electric axial flux motor at its Berlin-Marienfelde facility.

Founded in 1902, the company’s oldest active manufacturing site is being transformed into a global centre of excellence for high-performance electric motor fabrication.

The compact, high-power-density drive system is making its commercial production debut on the front and rear axles of the new all-electric Mercedes-AMG GT 4-Door Coupe.

Bringing axial flux technology to automotive mass production required overcoming steep engineering barriers. The manufacturing footprint spans approximately 30,000 square metres across three production halls, utilising seven highly automated assembly lines.

The production workflow comprises 98 distinct process steps, including 65 deployed for the first time by Mercedes-Benz and 35 entirely new to the global manufacturing sector. These industrial innovations have generated more than 30 patent applications.

Instead of traditional round wire, the axial flux motor uses rectangular copper wire, which packs more conductive material into a tight space and boosts power density. Mercedes-Benz co-developed a high-speed bending process to shape the wire at tight radii without pinching, wrinkling or breaking the insulation coating.

The coil ends are connected to adjacent wires via ultra-precise laser welding. This delivers minimal, highly localised thermal input to prevent heat damage to surrounding plastic components.

Furthermore, drivetrain plastic parts undergo simultaneous laser transmission welding. To prevent geometric inaccuracies, an AI-driven optical system tracks component placement in real time, locks virtual protection zones over sensitive areas and verifies seal integrity instantly.

During final assembly, the stator is structurally integrated between two heavy, magnet-loaded rotor discs. The line manages massive magnetic pull forces of up to 9 kN (approx. 900 kg), keeping the stator perfectly balanced within the magnetic centre plane under a tight tolerance of less than 0.1 millimetres using micro-frequency control pulses.
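The force-to-mass comparison above can be checked with a short calculation. This is a minimal sketch: the function name is illustrative, and it assumes the "kg" figure means the mass whose weight under standard gravity equals the quoted pull force.

```python
STANDARD_GRAVITY = 9.80665  # m/s^2, standard acceleration of gravity


def force_to_mass_equivalent_kg(force_newtons: float) -> float:
    """Return the mass (kg) whose weight under standard gravity equals the force."""
    return force_newtons / STANDARD_GRAVITY


pull_force_n = 9_000.0  # 9 kN, the upper figure quoted in the article
mass_kg = force_to_mass_equivalent_kg(pull_force_n)
print(f"{pull_force_n / 1000:.0f} kN corresponds to roughly {mass_kg:.0f} kg")
```

The result is slightly above 900 kg, which is consistent with the rounded "approx. 900 kg" given in the text.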

The current motor design builds on early prototype architectures from British electric motor specialist YASA, which became a wholly-owned subsidiary of Mercedes-Benz in 2021.

Michael Schiebe, Member of the Board of Management, Mercedes-Benz Group AG (Production, Quality & Supply Chain), said, “With the start of large-scale series production of the axial flux motor in Berlin-Marienfelde, we are bringing a pioneering innovation for electromobility into industrial reality. In doing so, we are sending a strong signal of technological leadership, operational excellence and the transformation of the automotive industry in Germany.”

Patrick Schnieder, German Federal Minister of Transport, noted, “Mastering the demanding axial flux technology is a major opportunity for the German and European automotive industry. This innovative electric motor helps establish a strong foothold in the premium segment. The start of production of the axial flux motor in Berlin-Marienfelde sends a powerful signal about Germany’s strength as an industrial location. With Mercedes-Benz’s axial flux motor, electromobility gains additional momentum. A decisive factor in the continued success of e-mobility is the availability of charging infrastructure. Through our Charging Infrastructure Master Plan 2030, we support both the considerable commitment of the charging infrastructure industry and the efforts of the automotive industry.”

NXP Unveils SAF8444 Single-Chip Radar SoC To Drive Affordable ADAS Adoption

- By MT Bureau

- June 09, 2026

NXP Semiconductors has introduced the SAF8444, an automotive radar system-on-chip designed to enable advanced driver assistance systems (ADAS) processing on the sensor itself.

Manufactured using 28-nanometre RFCMOS technology, the single-chip solution operates across the 76–81 GHz automotive radar band to support short-, medium- and long-range sensing. The chip is intended for vehicle platforms, including electric vehicles, where it reduces system costs by simplifying thermal management and vehicle integration.

The system addresses entry-level and economy vehicle lines by integrating hardware components to lower overall bill-of-materials costs. It combines an Arm Cortex-A53 applications processor, an Arm Cortex-M7 real-time core, and NXP’s proprietary Signal Processing Toolbox radar accelerator with digital signal processor support. This architecture allows perception-level processing to occur directly on the radar sensor, reducing the data-load reliance on centralised vehicle compute resources.

The technology is optimised for standard automated safety functions, including adaptive cruise control, autonomous emergency braking, blind-spot detection and park assist. To meet safety criteria such as the Euro NCAP 2030 requirements, which include low-light pedestrian detection, the chip fuses camera and radar data.

Additionally, it features a dual-threaded accelerator to run anti-jamming algorithms and mitigate radio frequency interference in congested environments.

NXP supports the device with an enablement ecosystem that includes radar software development kits, safety frameworks, security components, power management integrated circuits, and networking solutions.

Meindert van den Beld, Senior Vice-President and General Manager, Radar & ADAS, NXP Semiconductors, said, “SAF8444 strengthens our one-chip radar portfolio with a solution that balances performance, power efficiency, and cost. It allows customers to meet tightening safety requirements while reducing system cost—an essential step toward democratizing ADAS adoption.”

Bosch Introduces Third-Generation SiC Chips In India To Scale EV Efficiency

- By MT Bureau

- June 09, 2026

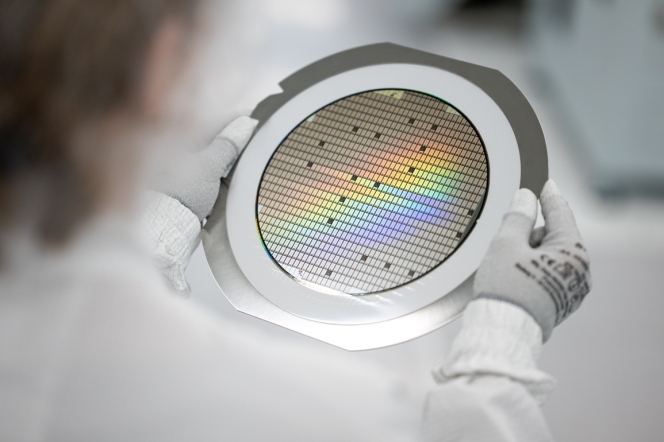

German technology company Bosch has officially introduced its third-generation Silicon Carbide (SiC) semiconductors to the Indian market. The strategic rollout targets the next phase of India's electric vehicle (EV) expansion, shifting the market focus from early adoption toward cost efficiency, longer ranges, and mass-market scaling.

Silicon carbide technology has become a cornerstone of modern EV drivetrains, acting as the primary control mechanism for energy flow within the power electronics system – specifically the inverter. By optimising the conversion of direct current (DC) from the battery into alternating current (AC) for the electric motor, SiC chips directly dictate a vehicle's overall electrical efficiency.

The Gen 3 SiC chips improve on legacy silicon and previous-generation components, delivering around 20 percent higher performance. This enables electric vehicles to achieve extended driving ranges without requiring automakers to increase physical battery pack sizes.

The SiC chips are manufactured using an advanced substrate, which reduces switching energy losses and improves thermal performance. This allows for less complex, more lightweight cooling architectures within the engine bay.

Enhanced miniaturisation allows Bosch to harvest more individual chips per semiconductor wafer, lowering manufacturing cost barriers and making advanced power electronics financially viable for mass-market budget EVs, two-wheelers and commercial fleets.

To date, Bosch has delivered more than 60 million SiC chips worldwide. The multinational engineering firm continues to funnel billions of euros into expanding its global semiconductor fabrication plants to reinforce supply line resilience against global automotive chip shortages.

By introducing the third-generation lineup locally, Bosch aims to establish an end-to-end semiconductor ecosystem in India, backing the government's localised advanced manufacturing and vehicle electrification goals.

Sandeep Nelamangala, Joint Managing Director, Bosch and President of Bosch Mobility India, said, “Our advanced SiC technology is designed to deliver the tangible benefits that Indian consumers demand: longer driving range, faster charging, and lower long-term costs. By making high-efficiency power electronics more accessible, we are helping to unlock the full potential of the EV market, making clean, efficient mobility a reality for everyone in India.”

Markus Heyn, Member of the Bosch Board of Management, and Chairman, Bosch Mobility business sector, said, “Our ambition is clear: we want to be a globally leading manufacturer of SiC chips. With our next generation SiC chips, we are helping our customers put even more powerful and efficient electric vehicles onto the road.”

BYD Showcases DM-i Electric-First Hybrid Technology In India

- By MT Bureau

- June 09, 2026

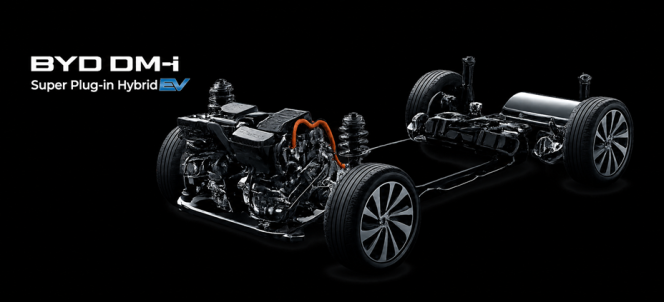

BYD India, a subsidiary of the world’s largest New Energy Vehicle (NEV) manufacturer, has showcased its DM-i (Dual Mode Intelligent) plug-in hybrid technology in India. Positioned as a transitional bridge between internal combustion engines and pure battery electric vehicles (BEVs), the incoming powertrain technology targets long-haul efficiency with a combined cruising range exceeding 1,200 km.

With a global plug-in hybrid history starting with the F3DM in 2008, BYD has amassed over 8 million cumulative PHEV sales, capturing a 35 percent global market share in the segment. The technology's introduction in India is intended to expand BYD's domestic portfolio beyond its current pure-EV lineup, which serves a growing base of 14,000 customers via 48 showrooms across 40 cities.

Unlike conventional hybrids that rely on a petrol engine as the primary mover with electric motors acting as secondary support, BYD's DM-i architecture reverses this layout to operate as an Electric-First system.

The vehicle relies primarily on battery power for everyday urban commutes. The petrol engine plays a secondary role in propulsion, working as a quiet generator to maintain the battery's state of charge and driving the wheels directly only in high-load, high-speed scenarios.

The system manages energy distribution via three intelligent operating modes:

- EV Mode: The vehicle relies entirely on the electric motor and battery pack, mimicking a standard BEV for zero-emission city driving.

- HEV ‘Series’ Mode: For mid-range driving, the onboard engine acts strictly as a generator, supplying electricity to charge the battery while the electric motor continues to turn the wheels.

- HEV ‘Parallel’ Mode: Under heavy acceleration or high-speed cruising, the petrol engine mechanically couples to the drivetrain, providing direct propulsion to the wheels alongside the electric motor.
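The three modes above can be pictured as a simple selection rule. The sketch below is purely illustrative: the inputs (speed, throttle demand, battery state of charge), the threshold values and every name are hypothetical assumptions made for this example, not BYD's actual control logic or calibration.

```python
from enum import Enum


class Mode(Enum):
    EV = "EV"
    SERIES = "HEV Series"
    PARALLEL = "HEV Parallel"


# Hypothetical thresholds chosen only for illustration; not BYD figures.
LOW_SOC = 0.25           # state of charge below which the engine starts generating
HIGH_SPEED_KMPH = 80.0   # speed treated here as "high-speed cruising"
HEAVY_THROTTLE = 0.8     # throttle fraction treated here as "heavy acceleration"


def select_mode(speed_kmph: float, throttle: float, soc: float) -> Mode:
    """Pick an operating mode from speed, throttle demand (0-1) and battery SoC (0-1)."""
    # Heavy load or high speed: engine couples mechanically alongside the motor.
    if speed_kmph >= HIGH_SPEED_KMPH or throttle >= HEAVY_THROTTLE:
        return Mode.PARALLEL
    # Battery running low: engine runs as a generator, motor still drives the wheels.
    if soc < LOW_SOC:
        return Mode.SERIES
    # Otherwise drive on battery power alone.
    return Mode.EV


for speed, throttle, soc in [(30, 0.2, 0.8), (50, 0.3, 0.1), (110, 0.3, 0.8)]:
    print(speed, throttle, soc, "->", select_mode(speed, throttle, soc).value)
```

A production system would presumably weigh many more signals; the point here is only the ordering of the checks, with direct engine drive reserved for the highest-demand cases.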

The DM-i platform pairs advanced electric motor hardware with a highly specialised internal combustion engine optimised for thermal cycling:

- Xiaoyun 1.5L Engine: The platform utilises a dedicated 1.5-litre naturally aspirated petrol engine that achieves an industry-leading thermal efficiency of 43.04 percent.

- Super Hybrid Blade Battery: Power is stored in a specialised iteration of BYD's proprietary Lithium Iron Phosphate (LFP) Blade Battery, engineered for structural safety, puncture resistance and high thermal stability.

- Fuel Economy: Under standard test conditions, the powertrain achieves a low consumption rate of 4.8 litres per 100 km (approximately 20.8 kmpl).

- Acceleration: The Electric Hybrid System (EHS) delivers seamless, single-speed acceleration, enabling a zero to 100 kmph sprint time of under 5.5 seconds in its high-performance configurations.
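The fuel economy figure in the list above is quoted in two units, and the conversion between them is a simple reciprocal. A minimal sketch follows, with a function name chosen for illustration:

```python
def litres_per_100km_to_kmpl(litres_per_100km: float) -> float:
    """Convert fuel consumption in L/100 km to fuel economy in km per litre."""
    if litres_per_100km <= 0:
        raise ValueError("consumption must be positive")
    return 100.0 / litres_per_100km


quoted_consumption = 4.8  # L/100 km, figure quoted in the article
kmpl = litres_per_100km_to_kmpl(quoted_consumption)
print(f"{quoted_consumption} L/100 km is about {kmpl:.1f} kmpl")
```

Rounded to one decimal place, this matches the "approximately 20.8 kmpl" given in the article.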

Initially entering India in 2007 to build electric buses and commercial chassis, BYD India has scaled its passenger vehicle presence with models including the e6, Atto 3, Seal, eMax 7 and Sealion 7. The company supports its local assembly operations through two manufacturing facilities spanning over 140,000 square metres, representing an investment of more than USD 200 million.

Rajeev Chauhan, Head of the Electric Passenger Vehicles Business, BYD India, said, "The introduction of DM-i technology marks a pivotal step in our commitment to making sustainable mobility more versatile and accessible for Indian consumers. By enabling electric-first driving for daily use while seamlessly supporting long-distance travel, DM-i addresses some of the most pressing barriers to the adoption of sustainable motoring in India. With this innovation, we are bringing a new technology to Indian consumers, and also shaping a smarter, more flexible pathway towards sustainable transportation."
